All published articles of this journal are available on ScienceDirect.

Development and Validation of Instrument to Measure Thinking Patterns: A Structural Equation Modeling Analysis

Abstract

Background:

As an instrument that measures thinking processes, the cognitive reflection test still has a number of problems, especially in terms of its validity and reliability.

Aims:

This research aimed to develop instruments to identify patterns of thinking that meet psychometric requirements.

Methods and Results:

Participants in the research were 727 students from the State University of Surabaya, including 322 (44%) men and 405 (56%) women with a mean age of 19.17 years. The first examination, using exploratory factor analysis, showed that the scale of thinking patterns, which we later called the Intuitive-Reflective Scale (IRS), had a conceptual structure consisting of 5 factors with factor loadings of .40 - .80. The five factors explained 52.57% of the total variance, and the scale had a Cronbach’s Alpha reliability of .71. The second examination, using confirmatory factor analysis based on structural equation modeling, showed that the IRS had a factor structure consistent with the results of the first examination and was a significant predictor of academic performance.

Conclusion:

The hypothesized factor structure fits the empirical data, with a comparative fit index of .96 and a root mean square error of approximation of .07.

1. INTRODUCTION

As an instrument for measuring thought processes, the Cognitive Reflection Test (CRT) has been widely used in a number of studies [1-3]. Unfortunately, the CRT exhibits a number of problems. First, the CRT, which consists of 3 items, more closely resembles intelligence tests that measure thinking ability than a measure of individual thought patterns. Second, the CRT does not adequately reflect the attributes of a comprehensive thought process because all CRT items are numerically based and measure the same aspects. Good instruments reflect the characteristics of the attributes to be measured [4-6]. Third, the data generated from the CRT are in the form of right or wrong answers, which are nominal or ordinal. Such data are of limited use in statistical analysis because they do not support arithmetic operations such as addition, unlike ratio scale data [7, 8]; as a result, more advanced inferential statistical analysis cannot be applied. Fourth, the CRT results proved to be influenced by respondents' familiarity with the questions [9-11]. That is, if the respondent has worked on the problem before and already knows its logic, the CRT is no longer valid and effective for measuring the thought process. Fifth, the results of validity tests of the CRT are less than encouraging: the test is valid only for measuring reflective thinking, not intuitive thinking [12], and its validity is low when repeated testing is carried out [1].

Many attempts have been made to overcome these weaknesses, for example, by Cheng and Janssen [11] and Thomson [10], who compiled new items that they call CRT-2. However, it seems that these efforts cannot significantly eliminate the limitations of the method; they face more or less the same problems as the first CRT. Therefore, the development of new instruments to measure thinking patterns becomes an urgent requirement. The instrument in this research was developed based on dual-processing theory, namely system 1 and system 2 [13, 14], which were then linked and explored with neuroscience [15-18]. According to the dual-processing theory, it is assumed that system 1 is related to the limbic system, while system 2 is related to the neocortex.

Neuroscientists have also found that the behavior displayed by humans has a neural basis in brain structure [16, 19]. Peters explained that the frontal area of the brain functions to make decisions based on rational reasons, including weighing, analyzing, and considering an action in depth in terms of good and bad, profit and loss. People who think logically and deeply make heavy use of the frontal areas in their thought processes. In contrast to the frontal area, the limbic area functions to respond instinctively to emotional stimuli, and its responses tend to be rushed, impulsive, and not infrequently destructive. People who respond to problems emotionally without thinking at length, form prejudices without data, and draw conclusions speculatively make heavy use of the limbic areas in their thought processes.

In psychology, the problem is actually not new; it has been studied since the 1970s and 1980s. The theme later gained prominence after Kahneman conducted an in-depth study of cognitive biases in decision making, especially in relation to the economic behaviour of individuals [13]. Kahneman argues that when making a decision, humans generally rely on biased experience, do not use their reasoning optimally, and tend to favor short-term interests. Consequently, many actions that result from the decision-making process are counterproductive and less effective in achieving goals. Kahneman uses the terms thinking fast and slow, which are concepts derived from system 1 and system 2 [14-20]. Thinking fast is characterized by a decision-making process that is emotional, intuitive, and automatic, lacks in-depth consideration, and is difficult to control in the face of instinctive desires. Meanwhile, thinking slow is characterized by decision making that is rational, requires in-depth consideration, is relatively flexible, and is adaptive to rules.

If an individual is accustomed to using intuitive thinking patterns in making decisions, then in the long run, his or her mind cannot function optimally and critical thinking habits will not develop. Eventually, individuals lose the frame of reference for understanding problems clearly, including solving problems in life. This situation is not only unfavorable but also counterproductive to progress. In everyday life, signs of intuitive thinking patterns still appear in many societies. For example, many people fall victim to fraud under the guise of investment plans that obviously make no sense. The abundance of information that is often called “big data” is apparently not directly proportional to the intelligence of individuals in acting and making decisions [21, 22]. What emerges instead is a prevailing irrationality, marked by the loss of rational reasoning and the lack of awareness of the importance of verification before coming to conclusions. People become rushed, spontaneous, and impulsive, without thinking about the truth and dignity that underlie their actions.

In education, thinking patterns are closely related to learning processes and outcomes [23-25]. Learning is not just an effort to get an academic score, but rather an effort to build a civilization that educates life [26, 27]. Information as a source of learning can come from classroom activities such as teaching materials, explanations from lecturers, and communication between students through discussion, and can also spread via public cyberspace such as the internet and social media. Research on thinking patterns becomes urgent in the current post-truth era, when people tend to accept truth based on their emotions and beliefs rather than on proven facts [21, 22]. The truth seems to be determined not by data and facts, but by individual perceptions and beliefs. If the facts are in line with their beliefs, people are convinced of the truth without verification [28]. This condition is amplified by VUCA (Volatility, Uncertainty, Complexity, Ambiguity).

Thus, thinking patterns become a crucial problem in building civilization and a fundamental problem in education. The question is how to identify thinking patterns with an instrument that meets psychometric eligibility [6, 29]. So far, two common instruments are used by researchers, namely the Cognitive Reflection Test (CRT) [9, 30] and self-reports such as the Thinking Styles Inventory (TSI) [31, 32]. The first instrument is more like an intelligence test that measures an individual's cognitive abilities than a measure of the thinking patterns or habits of thinking preferred by the individual [1]. The second instrument may identify a person's thinking preferences, but after being tested on a broader scale, many of its items proved inadequate: out of a total of 104 items, only 32 have psychometric eligibility [33]. It seems that the theoretical basis used, namely mental self-government, which treats the brain mechanism as a government organization, is less relevant. More or less the same situation applies to the Rational Experiential Inventory (REI) [20], the development of which is based on Cognitive Experiential Self-Theory (CEST). Thus, the development of thinking pattern instruments remains an open concern.

Identifying thinking patterns is an important part of the process of improving thinking performance in education. Unfortunately, until now, there has been no instrument that can identify patterns of thinking adequately. Therefore, developing instruments that meet psychometric requirements is a necessary task. This research aims to develop a thinking pattern instrument that meets psychometric requirements, namely validity and reliability. If this purpose is achieved, a new model that adequately describes the thinking patterns of students could be implemented by researchers and lecturers to improve student education. As educators, we will not be able to improve students' thinking if we are unable to identify the relevant thinking patterns. Errors in thinking, in turn, lead to errors in behavior. Thus, the results of this research are very useful for building an intelligent civilization in a constructive and progressive manner.

2. STUDY I

2.1. Methods

At this stage, the research aims to compile the items developed based on dual-processing theory and test them with exploratory factor analysis in order to find the conceptual structure and loading factors of each item. Participants at this stage were 310 students from the Faculty of Sports Science, State University of Surabaya, consisting of 196 men and 114 women, with a mean age of 19.13 ±1.02 years.

In developing the instrument, five steps need to be carried out [29], namely (1) conceptualization, (2) compilation of items and scales, (3) testing, (4) analysis of items, which includes testing of validity and reliability, and (5) final revision and format. The instrument was developed based on the process of cognition commonly referred to as system 1 (i.e. limbic-related system) and system 2 (i.e. neocortex-related system). The thought process of system 1 is characterized as unconscious, automatic, fast, perceptive, associative, pragmatic, parallel, and stereotyped, with results that are less optimal; the thought process of system 2 is characterized as conscious, controlled, effortful, slow, analytical, procedural, logical, sequential, and egalitarian, with a final result that is more optimal. These characteristics of both systems were described in detail by Evans [14] and Epstein et al. [20]. In the brain mechanism, system 1 is influenced by the limbic system, the area of the brain responsible for emotions, whereas system 2 is driven by the neocortex, the area of the brain responsible for rational thoughts. Based on these concepts and indicators (indicated characteristics), forty item statements were developed and used in this study. The set of items was designed in the form of a self-report using a Likert scale, from 1 (strongly disagree) to 6 (strongly agree).

After the items and the procedures for their administration were arranged, the researchers conducted a field trial. In this trial, we identified problems that emerged during data collection, and we also tested construct validity using exploratory factor analysis [6, 7]. Exploratory factor analysis has two stages: factor extraction and factor rotation. The results of the factor extraction stage are often not interpretable; therefore, a second stage of factor rotation is needed. In this research, factor extraction was carried out by principal component analysis, while factor rotation was carried out by an oblique method. An oblique rotation allows the different factors to correlate with one another. In other words, the factors are not assumed to be independent.
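The extraction stage described above can be illustrated with a minimal sketch. It assumes NumPy, uses synthetic data rather than the study's responses, and the function name `extract_components` is hypothetical. It covers only principal component extraction with the eigenvalue > 1 retention rule; the oblique rotation step is not implemented here.

```python
import numpy as np

def extract_components(responses):
    """Principal component extraction with the eigenvalue > 1 retention rule."""
    corr = np.corrcoef(responses, rowvar=False)   # item-by-item correlation matrix
    eigvals, eigvecs = np.linalg.eigh(corr)       # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]             # reorder to descending
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    n_keep = int(np.sum(eigvals > 1.0))           # components with eigenvalue > 1
    # Unrotated loadings: each eigenvector scaled by the square root of its eigenvalue.
    loadings = eigvecs[:, :n_keep] * np.sqrt(eigvals[:n_keep])
    return eigvals, n_keep, loadings

# Synthetic data (hypothetical): 300 respondents, 6 items driven by 2 latent factors.
rng = np.random.default_rng(0)
latent = rng.normal(size=(300, 2))
noise = 0.5 * rng.normal(size=(300, 6))
items = np.repeat(latent, 3, axis=1) + noise      # items 0-2 follow factor A, 3-5 factor B

eigvals, n_keep, loadings = extract_components(items)
explained = 100 * eigvals[:n_keep].sum() / eigvals.sum()
print(n_keep, np.round(eigvals, 2), round(explained, 1))
```

Because the eigenvalues of a correlation matrix sum to the number of items, the percentage of variance explained by the retained components is their eigenvalue sum divided by the item count.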

2.2. Results

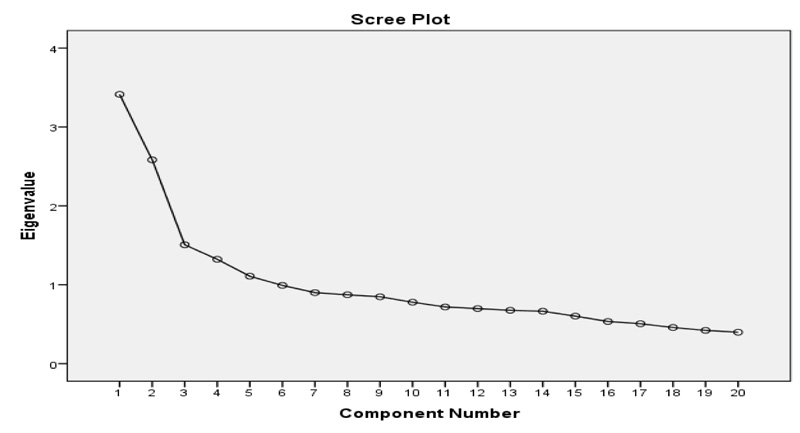

The factor analysis showed that the five factors of the IRS explain 52.57% of the total variance and that the item loadings are within 0.40 - 0.80. The factor loading is an important indicator of the extent to which an item contributes to the total variance. According to Nunnally and Bernstein [6], a loading is considered sufficient if it is ≥ .30. Additionally, the IRS factors were retained on the basis of an eigenvalue > 1. Items with a loading below .30 and factors with an eigenvalue < 1 were eliminated. Thus, the selected items have sufficiently high feasibility, especially in terms of construct validity (Table 1).

| Items | Factor 1 | Factor 2 | Factor 3 | Factor 4 | Factor 5 |
|---|---|---|---|---|---|
| i16 | .770 | | | | |
| i17 | .733 | | | | |
| i4 | .663 | | | | |
| i7 | .634 | | | | |
| i5 | .570 | | | | |
| i11 | .530 | | | | |
| i15 | .407 | | | | |
| i13 | | .791 | | | |
| i3 | | .680 | | | |
| i14 | | .642 | | | |
| i12 | | .614 | | | |
| i1 | | | .752 | | |
| i6 | | | .730 | | |
| i2 | | | .619 | | |
| i8 | | | | .714 | |
| i18 | | | | .608 | |
| i9 | | | | .466 | |
| i19 | | | | | .806 |
| i20 | | | | | .779 |
| i10 | | | | | .386 |
| Eigenvalues | 3.41 | 2.58 | 1.51 | 1.32 | 1.11 |

Factor 1 = effort, factor 2 = evaluation, factor 3 = tempo, factor 4 = response, and factor 5 = result.

The total number of selected IRS items is 20. The selected items are listed in the appendix.

Using the criteria of factor loading > .30 and eigenvalue > 1, we obtained 20 items categorized into 5 factors. To clarify, Fig. (1) shows the scree plot used to determine the number of latent factors. Factor 1 (effort) explains 14.97% of the total variance and consists of 7 items with factor loadings of 0.40 - 0.77. Factor 2 (evaluation) explains 10.98% of the total variance and consists of 4 items with factor loadings of 0.61 - 0.79. Factor 3 (tempo) explains 9.23% of the total variance and consists of 3 items with factor loadings of 0.61 - 0.75. Factor 4 (response) explains 8.86% of the total variance and consists of 3 items with factor loadings of 0.46 - 0.71. Factor 5 (result) explains 8.51% of the total variance and consists of 3 items with factor loadings of 0.38 - 0.80. The naming of the five factors is related to the substance of the items in each factor and refers to dual-processing theory [13, 14]. In theory, the differences in thinking patterns are determined by individual efforts, willingness to evaluate, and the speed of responding to stimuli. At this stage, the IRS reliability was also estimated using Cronbach’s Alpha. The test was carried out simultaneously for the 20 items of the IRS and yielded a Cronbach’s Alpha of 0.71. Referring to the reliability criteria [7, 34], the IRS is shown to be reliable.
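For readers unfamiliar with the reliability estimate, the following is a minimal sketch of how Cronbach's Alpha is computed from a respondents-by-items score matrix. It assumes NumPy; the function name `cronbach_alpha` and the small score matrix are hypothetical and are not the study's data.

```python
import numpy as np

def cronbach_alpha(scores):
    """Cronbach's Alpha for a (respondents x items) score matrix."""
    scores = np.asarray(scores, dtype=float)
    k = scores.shape[1]                           # number of items
    item_vars = scores.var(axis=0, ddof=1)        # sample variance of each item
    total_var = scores.sum(axis=1).var(ddof=1)    # sample variance of the total score
    return k / (k - 1) * (1 - item_vars.sum() / total_var)

# Hypothetical responses of 5 people to 4 items on a 1-6 scale.
demo = [
    [2, 3, 2, 3],
    [4, 4, 5, 4],
    [5, 6, 5, 5],
    [3, 3, 4, 2],
    [6, 5, 6, 6],
]
print(round(cronbach_alpha(demo), 3))
```

Alpha approaches 1 when items vary together and falls toward 0 when they are unrelated, which is why negatively worded items must be recoded before the estimate is computed.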

3. STUDY II

3.1. Methods

At this second stage, the research aims to test the consistency of the IRS conceptual structure by using confirmatory factor analysis based on Structural Equation Modeling (SEM) with IBM Amos 23. In addition, the effect of thinking patterns (IRS) was also tested on academic performance variables. Participants at this stage of the research were students of the State University of Surabaya who came from the departments of psychology, economics, and science. Respondents were 344 students, including 64 men and 280 women with a mean age of 19.36 ±1.01 years.

Before filling out the questionnaire, the respondents were informed about the objectives and procedures of the IRS test in order to make data collection effective. The IRS consists of 20 bipolar items with 10 positive and 10 negative statements. Responses use a Likert scale ranging from 1 (disagree) to 6 (agree). A score close to 6 means the respondent tends to have a reflective thinking pattern, and a score close to 1 means the respondent tends to have an intuitive thinking pattern. Respondents completed the IRS in approximately 15 minutes. All collected data were verified to ensure their feasibility. Negative statements were recoded. The data were analyzed using confirmatory factor analysis based on SEM [7, 35].
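The recoding of negative statements can be sketched as follows. On a 1 to 6 scale, a reversed score is 7 minus the raw score, so that higher values consistently indicate reflective thinking. The function names and the item identifiers in the example are hypothetical; the text does not state which item numbers are the negative statements.

```python
def reverse_code(score, low=1, high=6):
    """Reverse a Likert response so that 1 becomes 6, 2 becomes 5, and so on."""
    if not low <= score <= high:
        raise ValueError(f"score {score} is outside the {low}-{high} scale")
    return low + high - score

def total_score(responses, negative_items):
    """Sum item scores after recoding the negatively worded items.

    responses: dict mapping item id -> raw score
    negative_items: set of item ids to reverse (an assumption of this sketch)
    """
    return sum(
        reverse_code(value) if item in negative_items else value
        for item, value in responses.items()
    )

# Hypothetical respondent: items "a" and "b" are negative statements.
raw = {"a": 2, "b": 1, "c": 5, "d": 6}
print(total_score(raw, negative_items={"a", "b"}))
```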

A test is considered to have adequate validity and reliability as a psychometric instrument if it is replicable and its results are relatively consistent [4-6]. Therefore, after the confirmatory factor analysis, the relationship of thinking patterns with other relevant variables was examined. Academic performance is indicated by curiosity [36] and grade point average (IPK, Indeks Prestasi Komulatif). IPK is calculated as the sum of the scores obtained in each subject, weighted by credit hours, divided by the total number of class credit hours the student took. The IPK has a scale ranging from 0 to 4 and is assessed at the end of each semester.
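Read as a credit-weighted average, the IPK calculation can be written as below. This is a sketch under that assumption; the symbols $g_i$ (grade point on the 0 to 4 scale in subject $i$), $c_i$ (credit hours of subject $i$), and $n$ (number of subjects taken) are introduced here for illustration and do not appear in the original.

$$
\mathrm{IPK} = \frac{\sum_{i=1}^{n} c_i \, g_i}{\sum_{i=1}^{n} c_i}
$$

For example, grade points of 4 and 3 in subjects worth 3 and 2 credit hours would give $(3 \cdot 4 + 2 \cdot 3) / (3 + 2) = 3.6$.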

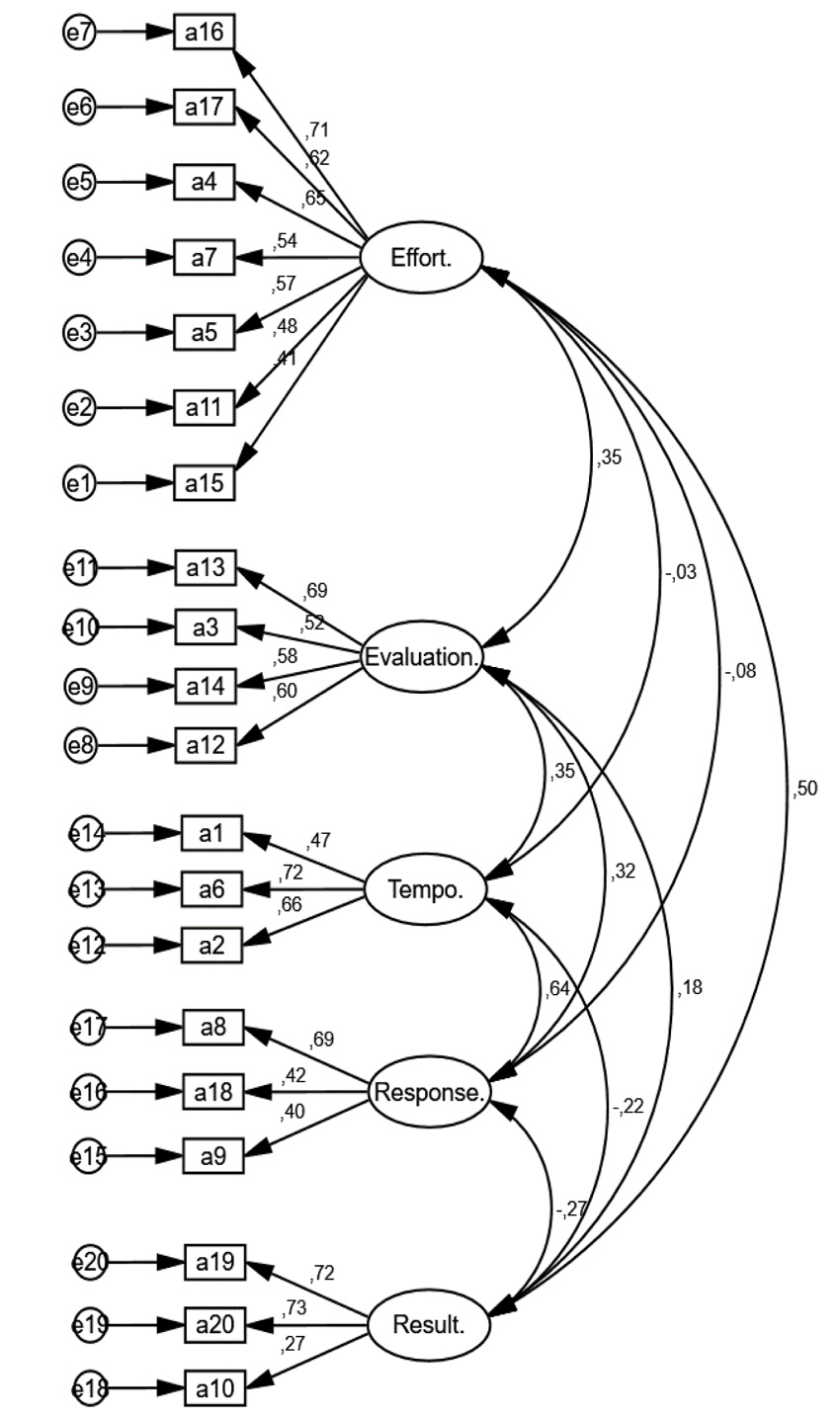

3.2. Results

The results of confirmatory factor analysis show that the conceptual structure of the IRS is confirmed by the collected data. The IRS consists of 5 factors: effort, evaluation, tempo, response, and result. All items correlate significantly with their respective factors (Fig. 2). The effort factor has regression coefficients of 0.41 - 0.71 with p<.01; the evaluation factor has regression coefficients of 0.52 - 0.69 with p<.01; the tempo factor has regression coefficients of 0.47 - 0.72 with p<.01; the response factor has regression coefficients of 0.40 - 0.69 with p<.01; and the result factor has regression coefficients of 0.27 - 0.73 with p<.01. The interrelations between factors are also significant, except for those between the effort and tempo factors and between the effort and response factors.

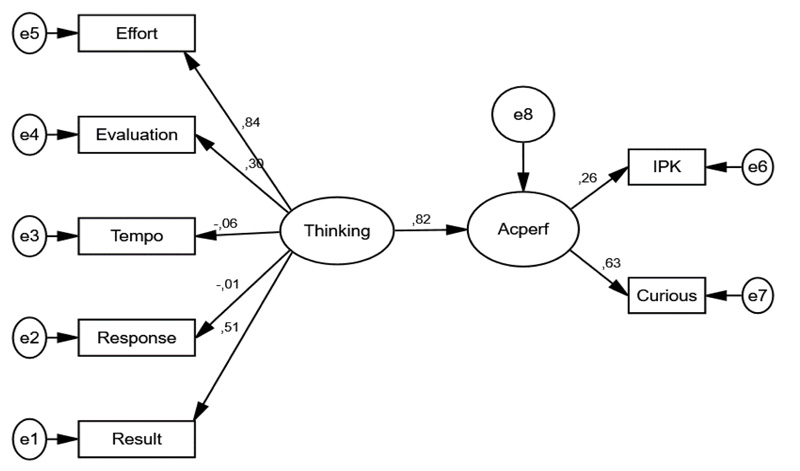

The analysis of the relationship of thinking patterns with academic performance is shown in Fig. (3). The results of SEM analysis show that the thinking patterns measured by the IRS are significantly related to students’ academic performance, as indicated by a regression coefficient of 0.82 with p<.01. Thus, the thinking pattern is an effective predictor of academic performance estimated via IPK and curiosity. In order to examine whether the model of structural relations between variables fits the empirical data, the CFI (comparative fit index) and RMSEA (root mean square error of approximation) were used as well [7, 37]. Our results show that the hypothesized model of structural relations between variables fits the empirical data adequately (CFI = 0.96 and RMSEA = 0.07).
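As background on the two fit indices, the sketch below applies their commonly used chi-square based definitions. The chi-square values and degrees of freedom in the example are hypothetical, since the article does not report them; only the sample size of 344 comes from the text. Some SEM software uses N rather than N - 1 in the RMSEA denominator, so small differences from reported values are possible.

```python
import math

def rmsea(chi2, df, n):
    """Root mean square error of approximation from the model chi-square."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))

def cfi(chi2_model, df_model, chi2_base, df_base):
    """Comparative fit index: model misfit relative to a baseline (null) model."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_base - df_base, d_model)
    if d_base == 0.0:
        return 1.0          # neither model shows misfit beyond its degrees of freedom
    return 1.0 - d_model / d_base

# Hypothetical inputs; n = 344 is the Study II sample size.
print(round(rmsea(chi2=400.0, df=160, n=344), 3))
print(round(cfi(chi2_model=400.0, df_model=160, chi2_base=3000.0, df_base=190), 3))
```

Both indices compare the model chi-square with its degrees of freedom: RMSEA scales the excess by sample size, while CFI expresses it as a proportion of the baseline model's excess.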

Regarding effect sizes, both direct and indirect effects indicate fairly strong relationships between variables (Table 2). The strongest direct effect was that of thinking pattern on the effort variable (regression coefficient = 0.84, SE = 0.09, p<.01). Significant direct effects of thinking pattern were also found on the result variable (regression coefficient = 0.51, SE = 0.27, p<.01) and the evaluation variable (regression coefficient = 0.30, SE = 0.66, p<.01). The data suggest that a person's thinking patterns are largely influenced by efforts to search for and find solutions, evaluations of information and events, and the quality of the results obtained. Serious effort and evaluation indicate that a person has a reflective thinking pattern; conversely, if both variables are weak, the person has an intuitive thinking pattern [4, 14]. The same applies to the result variable: reflective thinking tends to produce decisions that are meaningful and satisfying in the long run, whereas intuitive thinking tends to produce decisions that are less meaningful and satisfying only in the short term. In addition, Table 2 shows significant indirect effects of thinking patterns on the curious variable and GPA. The regression coefficients were 0.51 for the curious variable and 0.21 for GPA (p<0.01). The data suggest that individuals with a reflective thinking pattern tend to have a strong desire to gain knowledge and to achieve high academic performance as well.

| Dependent Variables | Thinking Pattern: DE | Thinking Pattern: IE | Thinking Pattern: TE | Academic Performance: DE | Academic Performance: IE | Academic Performance: TE |
|---|---|---|---|---|---|---|
| Academic performance | 0.82** (0.01) | - | 0.82** (0.01) | - | - | - |
| Curious | - | 0.51** (0.01) | 0.51** (0.01) | 0.63** (0.10) | - | 0.63** (0.10) |
| IPK/GPA | - | 0.21** (0.02) | 0.21** (0.02) | 0.26** (0.25) | - | 0.26** (0.25) |
| Effort | 0.84** (0.09) | - | 0.84** (0.09) | - | - | - |
| Evaluation | 0.30** (0.66) | - | 0.30** (0.66) | - | - | - |
| Tempo | -0.06 (0.91) | - | -0.06 (0.91) | - | - | - |
| Response | -0.01 (0.65) | - | -0.01 (0.65) | - | - | - |
| Result | 0.51** (0.27) | - | 0.51** (0.27) | - | - | - |

** p<0.01; the value in parentheses is the standard error of regression. Thinking Pattern and Academic Performance are the independent variables; DE = direct effect, IE = indirect effect, TE = total effect.
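The indirect effects in Table 2 are consistent with the usual path-analysis rule that an indirect effect is the product of the direct effects along the path. The sketch below applies that rule to the reported coefficients; the function name `indirect_effect` is hypothetical, and the small differences from the tabled values (0.51 and 0.21) presumably reflect rounding of the published coefficients.

```python
import math

# Direct effects as reported in Table 2.
THINKING_TO_PERFORMANCE = 0.82
PERFORMANCE_TO_CURIOUS = 0.63
PERFORMANCE_TO_GPA = 0.26

def indirect_effect(*path_coefficients):
    """Indirect effect as the product of the direct effects along one path."""
    return math.prod(path_coefficients)

via_curious = indirect_effect(THINKING_TO_PERFORMANCE, PERFORMANCE_TO_CURIOUS)
via_gpa = indirect_effect(THINKING_TO_PERFORMANCE, PERFORMANCE_TO_GPA)
print(round(via_curious, 4), round(via_gpa, 4))
```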

It is interesting to know whether the IRS and the CRT are correlated. As discussed above, the CRT is commonly used to measure thinking patterns, but it has several weaknesses. This research therefore examined the relationship between the IRS and the CRT. The correlation test showed that the IRS correlated with the CRT, with a coefficient of 0.088 (p = 0.001). That is, the IRS has validity equivalent to that of the CRT, and both methods can be used to measure one's thinking patterns. However, when the predictive power of the two instruments was examined using regression analysis, we found that the IRS could predict a student’s academic performance (Table 3), whereas the CRT could not.

| Predictor | Unstandardized Coefficient (B) | Std. Error | Standardized Coefficient (Beta) | t | Sig. |
|---|---|---|---|---|---|
| (Constant) | 3.039 | 0.065 | - | 46.689 | 0.000 |
| IRS | 0.005 | 0.001 | 0.204 | 6.562 | 0.000 |
| CRT | 0.009 | 0.006 | 0.048 | 1.540 | 0.124 |
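To show how the unstandardized coefficients in Table 3 translate into a prediction, the sketch below evaluates the fitted regression equation. It assumes that the outcome is academic performance on the 0 to 4 GPA scale and that the IRS predictor is the total score over 20 items; neither is stated explicitly in the table, and the input values are hypothetical.

```python
# Unstandardized coefficients as reported in Table 3.
INTERCEPT = 3.039
B_IRS = 0.005
B_CRT = 0.009

def predict_performance(irs_total, crt_score):
    """Predicted outcome from the fitted equation: constant + B_IRS*IRS + B_CRT*CRT."""
    return INTERCEPT + B_IRS * irs_total + B_CRT * crt_score

# Hypothetical respondents who differ only in IRS total score.
lower = predict_performance(irs_total=60, crt_score=1)
higher = predict_performance(irs_total=100, crt_score=1)
print(round(lower, 3), round(higher, 3), round(higher - lower, 3))
```

Under these assumptions, a 40-point difference in IRS total corresponds to a predicted difference of 0.2 in the outcome, whereas the CRT coefficient is not statistically significant (Sig. = 0.124).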

4. DISCUSSION

Through an in-depth examination process comprising exploratory factor analysis, confirmatory factor analysis, and SEM, this research succeeded in developing a scale of thinking patterns that meets psychometric criteria. The IRS has 20 bipolar items (see appendix) grouped into 5 factors, namely effort, evaluation, tempo, response, and result. These factors reflect the attributes of thinking, as required of a good instrument [4-6]. We identified a number of characteristics, such as effort in searching for and finding solutions, speed of responding to stimuli, and evaluation of stimuli, and we also found a new aspect of the thinking process that has been overlooked so far, namely “results” as a dependent variable of thinking patterns. Intuitive thinking patterns often produce dissatisfaction, loss, and even regret, while reflective thinking has a greater chance of producing satisfaction [17].

The IRS has factor loadings of 0.40 - 0.80 with eigenvalues greater than 1, explains 52.57% of the variance, and has a reliability of 0.71. As a psychometric indicator of the thinking process, the IRS is comparable to the REI, which consists of 10 items. The REI has factor loadings of 0.59 - 0.79 with eigenvalues greater than 1 and explains 48.2% of the variance, with reliabilities of 0.72 and 0.73 [20]. The development of the IRS is based not only on the statistical analyses commonly used in the preparation of scales, such as inter-item correlations, regression analysis, and Cronbach's Alpha reliability [34], but also on a more sophisticated approach, namely SEM. By using SEM, the conceptual relations model between variables can be examined simultaneously [7, 37]. The test results show little difference between the conceptual model and the observed data, with CFI = 0.96 and RMSEA = 0.07.

The IRS is an alternative instrument for measuring a person's cognitive pattern. The CRT is a commonly used instrument for measuring cognition [1, 9, 30], although it has many weaknesses [12]. A number of researchers have identified the CRT’s weaknesses: it is more like an intelligence test, it takes the form of numerical calculations, and familiarity with the items affects the validity of the results. Thus, the results of our investigation are in agreement with some previous studies [1, 12]. Our results show that the IRS is an effective predictor of academic performance. The relationship between thinking patterns and academic performance is a necessity [24, 38], and this is supported by our finding as well. Accordingly, the thinking pattern should relate directly to the learning achievements of students.

What are the implications of this research? Identifying thinking patterns with good instruments becomes urgent in the education and training of individuals with regard to decision making. It has been reported that humans do not have an encouraging track record in making decisions and tend to be biased in thinking [39]. Many initial decisions turn out to be inappropriate after an in-depth evaluation of the facts. For instance, it is reported that 44% of advocates do not recommend that their children choose the same career, 40% of executives leave their jobs in the first 18 months, and more than half of teachers leave their jobs in the first 4 years [40]. In our recent investigation, 13% of students made the wrong choice of education program. The highest rate occurred in the faculty of social sciences at 26%, followed by the faculty of language and arts at 18%. Regarding the choice of profession, 63% of the students said they would choose a non-educational profession and only 37% chose the teaching profession [41]. Many facts reported in the literature affirm that individual decision making tends to be inaccurate and often leads to regret. The dominant role of the limbic system in intuitive thinking is incontestable.

We designed the IRS and used it to describe the thinking patterns in a student population. It should be noted that the problem of thinking is not confined to the education community [38, 42]; it exists in almost all areas of life, including politics, economics, and government bureaucracy. Thus, in the future, other researchers could implement similar methods and procedures to identify “typical thinking patterns” in other population groups and compare them. It is possible that the structure of thinking patterns differs across professions.

CONCLUSION AND IMPLICATIONS

This research has addressed a number of problems that concern researchers regarding the use of the CRT as an instrument to measure thinking models. This research has succeeded in developing a scale of thought patterns, which we call the IRS. The instrument has a conceptual structure consisting of 5 factors with factor loadings of 0.40 - 0.80. The five factors explain 52.57% of the total variance. In reliability testing, the IRS has a Cronbach’s Alpha of 0.71. The IRS is correlated with, and is a significant predictor of, students’ academic performance. In terms of the conceptual relationship model, the IRS has been tested using SEM and shown to fit the empirical data. In connection with the results of this research, it is recommended that the IRS be used as an alternative for measuring individual thinking tendencies. As an instrument, the IRS needs to be further tested by involving other relevant variables, such as socioeconomic level, education level, parenting, leadership patterns, and successful performance.

ETHICS APPROVAL AND CONSENT TO PARTICIPATE

Not Applicable.

HUMAN AND ANIMAL RIGHTS

Not Applicable.

CONSENT FOR PUBLICATION

All participants took part on a voluntary basis and gave their informed consent.

AVAILABILITY OF DATA AND MATERIALS

Data is available but cannot be shared.

FUNDING

None.

CONFLICT OF INTEREST

The authors declare no conflict of interest, financial or otherwise.

ACKNOWLEDGEMENTS

Declared none.

APPENDIX

| S.No | Items |
|---|---|
| 1 | I am used to making decisions quickly |
| 2 | For me, the important thing is that the work is finished |
| 3 | My work is generally not as expected |
| 4 | For me, the important decision is immediately taken |
| 5 | I prefer something whose benefits can immediately be felt |
| 6 | I prefer to use the existing one rather than create a new one |
| 7 | I don't like difficult things even though I know it's important |
| 8 | From a number of decisions that I made, in the end there were many mistakes |
| 9 | I don't like things that require complex calculations |
| 10 | If something offends me, I react quickly |
| 11 | I usually try hard to find a solution |
| 12 | I am used to giving more attention to the quality of work |
| 13 | I need to evaluate every incident that befalls me |
| 14 | I usually need time to find a solution |
| 15 | Although several times failed, I kept trying to find a solution |
| 16 | I like challenges even though it's hard to do |
| 17 | I try to gather as much information as possible to make decisions |
| 18 | In deciding something, I need deep consideration |
| 19 | Most of the results of my work are very satisfying |
| 20 | Other parties respond positively to something I do |